Neural Networks Basics Explained

Neural networks form the backbone of modern artificial intelligence and machine learning systems. Today, they power technologies that people use every day. For example, voice assistants, recommendation engines, and self-driving cars rely heavily on them, as do image recognition and language translation tools.

To begin with, this beginner-friendly guide will help you understand how neural networks actually work. In addition, you will see why they are inspired by the human brain and how they learn from data. As a result, complex AI ideas will feel much simpler and more structured.

Furthermore, we will explore how neural networks evolved over time. You will also learn about different deep learning architectures that power modern AI models. Along with that, we will look at real-world neural network applications in industries such as healthcare, finance, marketing, and technology.

In addition to concepts, this guide introduces key tools and training methods used in AI development. We will also discuss major challenges and limitations. Finally, you will get a clear view of the trends shaping the future of deep learning.

Because of this structured approach, you will build a strong foundation in neural network basics, even if you are completely new to AI. Ultimately, everything will come together to give you a complete and practical understanding of how modern AI systems think and learn.

So, let’s move ahead and start breaking down these concepts step by step.

What Are Neural Networks?

Neural networks start with a simple idea. They are computing systems designed to learn from data, loosely inspired by how the human brain works. They are a branch of machine learning and power many modern AI models. In simple terms, a neural network helps a machine “learn patterns” instead of following fixed rules.

To understand this better, think about the human brain. The brain uses billions of neurons to process information. Similarly, artificial neural networks use connected nodes to process data. Each connection passes information forward, just like neurons transmit signals in the brain. As a result, machines can make decisions, recognize patterns, and improve over time.

At a basic level, a neural network has three main parts. First, the input layer receives raw data such as images, text, or numbers. Next, the hidden layers process this data step by step. Finally, the output layer gives the final result, such as a prediction or classification. Together, these layers form a structured flow of information.

In addition, you can think of neural networks as “decision-making layers.” For instance, each layer asks a small question about the data. Then, it passes the answer forward for deeper analysis. Because of this layered structure, complex problems become easier to solve.

For example, in spam email detection, the system checks words, patterns, and sender details. If it finds suspicious signals, it marks the email as spam. Similarly, in face recognition, the network identifies shapes, edges, and facial features to match a person correctly.

Therefore, neural networks play a major role in modern AI models. They help systems learn from experience, improve accuracy, and handle real-world tasks efficiently.

History & Evolution of Neural Networks

The history of neural networks begins in the mid-20th century, when scientists first tried to replicate how the human brain learns. In the 1940s and 1950s, early researchers introduced the idea of artificial neurons. This concept laid the foundation for the AI models we use today.

One of the earliest breakthroughs was the Perceptron model, developed in the late 1950s. It was designed to make simple decisions by learning from input data. However, a single-layer perceptron can only separate classes with a straight line, so it struggled with more complex problems, which limited its real-world use. As a result, interest in neural networks slowed down.

During the 1980s and 1990s, things started improving again. Algorithms like backpropagation helped networks learn more effectively. In addition, researchers discovered better ways to adjust weights and reduce errors. Still, progress remained slow because computers lacked enough processing power.

Setbacks like these contributed to periods known as AI winters, when funding and interest dropped significantly. However, research continued quietly in the background.

The real turning point came in the 2010s with the rise of deep learning. Thanks to powerful GPUs and massive datasets, neural networks became highly effective. Consequently, they started outperforming traditional machine learning methods on tasks such as image and speech recognition.

Today, neural networks are at the core of advanced AI models used by companies like Google, Meta, and OpenAI. They power everything from search engines to chatbots and image recognition systems.

Simple Evolution Timeline

- 1940s–1950s: Artificial neurons introduced

- 1958: Perceptron model developed

- 1980s–1990s: Backpropagation improves learning

- 2000s: Slow progress due to limited computing power

- 2010s–Present: Deep learning revolution begins

Therefore, the evolution of AI shows how persistence and technology together shaped modern neural networks.

How Neural Networks Work (Step-by-Step Breakdown)

Understanding neural networks becomes much easier when you see how they actually work step by step. Essentially, neural networks process data through a system of connected units called neurons. Each neuron plays a small but important role in decision-making.

To begin with, every neural network is made of layers of neurons. These include the input layer, hidden layers, and output layer. As data moves forward, each layer transforms it into more meaningful information.

Now, each neuron works using three key elements: weights, a bias, and an activation function. First, weights decide how important each input is. In other words, a weight with a larger magnitude means a stronger influence on the result. Next, the bias shifts the weighted sum up or down, which makes the model more flexible. Finally, the activation function transforms that sum and decides how strongly the neuron should “activate” and pass its signal forward. Common activation functions include ReLU and Sigmoid. Because they are nonlinear, they let the network handle complex patterns.
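To make the three elements concrete, here is a minimal sketch of a single neuron in plain Python. The input values, weights, and bias are hypothetical numbers picked only for illustration, and the function names are not taken from any particular library.

```python
import math

def relu(z):
    """ReLU: pass positive values through, clamp negatives to zero."""
    return max(0.0, z)

def sigmoid(z):
    """Sigmoid: squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias, activation):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)

# Hypothetical values chosen only for illustration.
inputs = [1.0, 2.0]
weights = [0.5, -1.0]   # the second input pulls the sum down
bias = 0.5

# Weighted sum: 1.0 * 0.5 + 2.0 * (-1.0) + 0.5 = -1.0
relu_out = neuron(inputs, weights, bias, relu)
sigmoid_out = neuron(inputs, weights, bias, sigmoid)
print(relu_out, round(sigmoid_out, 4))
```

With the same weighted sum, ReLU stays silent for a negative value, while Sigmoid returns a small positive number. That difference is one reason the choice of activation function matters.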

After that, the process of forward propagation begins. In this stage, data flows from the input layer through hidden layers and finally reaches the output layer. As a result, the network generates a prediction or decision based on learned patterns.
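Forward propagation can be sketched as a loop over layers, where each layer's output becomes the next layer's input. The example below assumes a tiny network with two inputs, two hidden neurons, and one output. All weights are hypothetical and hand-picked so the arithmetic is easy to follow.

```python
def relu(z):
    return max(0.0, z)

def identity(z):
    return z

def dense(inputs, weights, biases, activation):
    """One fully connected layer: each row of `weights` feeds one neuron."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

def forward(x, layers):
    """Pass x through each layer in order and return the final output."""
    for weights, biases, activation in layers:
        x = dense(x, weights, biases, activation)
    return x

# Hypothetical weights for illustration; a trained network would learn these.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, -1.0], relu),   # hidden layer, 2 neurons
    ([[2.0, 1.0]], [0.5], identity),                  # output layer, 1 neuron
]

prediction = forward([3.0, 1.0], layers)
print(prediction)
```

Here the hidden layer turns the input `[3.0, 1.0]` into `[2.0, 1.0]`, and the output layer combines those two values into a single prediction.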

However, the system does not stop there. Instead, it checks how accurate the prediction is. If there is an error, the network adjusts its weights and biases to improve future results. This learning cycle repeats until the model becomes accurate enough.

For example, imagine predicting house prices. The input data may include size, number of rooms, and location. Initially, the model may guess incorrectly. But over time, it learns which features matter more, such as location having higher impact than room count. Consequently, its predictions improve significantly.
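The house-price idea can be sketched with a very small model. The data below is made up: prices were generated as 4 times a location score plus 1 times the room count, so a model that learns well should end up weighting location more heavily. The model is a plain weighted sum with a simple gradient-based update, which is an assumption made to keep the sketch short, not a full neural network.

```python
# Hypothetical toy data: (location_score, rooms) -> price, in arbitrary units.
# Prices were generated as 4 * location_score + 1 * rooms.
data = [
    ((1.0, 1.0), 5.0),
    ((2.0, 1.0), 9.0),
    ((1.0, 2.0), 6.0),
    ((2.0, 3.0), 11.0),
    ((3.0, 2.0), 14.0),
]

def predict(weights, features):
    """Weighted sum of the features."""
    return sum(w * x for w, x in zip(weights, features))

def mean_squared_error(weights):
    """Average squared gap between predictions and true prices."""
    return sum((predict(weights, x) - y) ** 2 for x, y in data) / len(data)

weights = [0.0, 0.0]          # start with no knowledge
learning_rate = 0.05
initial_loss = mean_squared_error(weights)

for _ in range(2000):
    # Gradient of the mean squared error with respect to each weight.
    grads = [0.0, 0.0]
    for x, y in data:
        error = predict(weights, x) - y
        for i in range(len(weights)):
            grads[i] += 2 * error * x[i] / len(data)
    # Nudge each weight against its gradient.
    weights = [w - learning_rate * g for w, g in zip(weights, grads)]

final_loss = mean_squared_error(weights)
print([round(w, 3) for w in weights], initial_loss, final_loss)
```

The first guess (all weights at zero) is badly wrong, but repeated small corrections move the location weight toward 4 and the room weight toward 1.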

Types of Neural Networks in AI Models

Different AI models use different types of neural networks depending on the problem they are designed to solve. Each architecture is optimized for specific tasks such as image recognition, language processing, or sequence prediction. Therefore, understanding these types is a key part of any deep learning guide.

To begin with, the simplest type is the Feedforward Neural Network. In this model, data flows in one direction only—from input to output. There are no loops or backward connections. As a result, it works well for basic tasks like classification and regression problems.

Next, we have Convolutional Neural Networks (CNNs). These are widely used in computer vision tasks. For example, CNNs help identify objects in images, detect faces, and even analyze medical scans. In addition, they automatically extract important features like edges, shapes, and textures, which makes them highly efficient for visual AI models.
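The core operation inside a CNN is sliding a small grid of weights (a kernel) over the image. The sketch below assumes a tiny 4x4 grayscale image and a hand-written vertical-edge kernel. In a real CNN the kernel values are learned during training, and libraries add padding, strides, and many kernels per layer.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# A 4x4 grayscale image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-written vertical-edge kernel (an assumption for illustration).
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
for row in feature_map:
    print(row)
```

The feature map is zero wherever the image is flat and non-zero only in the middle column, exactly where dark meets bright. That is what "extracting an edge" means in practice.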

Another important type is the Recurrent Neural Network (RNN). Unlike feedforward networks, RNNs are designed to handle sequential data. For instance, they work well with text, speech, and time-series data. Because they carry a hidden state that summarizes previous inputs, they are useful in applications like language translation and speech recognition.

Furthermore, modern AI models often use Transformers, which represent a major breakthrough in deep learning. Instead of processing data step by step, transformers analyze entire sequences at once, using a mechanism called attention that lets every position weigh every other position. Consequently, they power advanced systems like chatbots, translation tools, and large language models.
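The "memory" of an RNN can be sketched with a single recurrent unit that mixes each new input with its previous state. The weights below are hypothetical, and real RNN layers use vectors and learned matrices instead of single numbers.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One step of a single-unit recurrent cell: mix the new input with the old state."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0):
    """Feed a sequence one element at a time, carrying the hidden state forward."""
    h = 0.0
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

# Both sequences end with the same input (0.0) but have different histories.
after_ones = run_rnn([1.0, 1.0, 0.0])[-1]
after_zeros = run_rnn([0.0, 0.0, 0.0])[-1]
print(round(after_ones, 4), round(after_zeros, 4))
```

Even though the last input is identical, the final states differ because the first sequence left a trace in the hidden state.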
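One way transformers look at a whole sequence at once is scaled dot-product attention. The sketch below is a simplified version: it uses the input vectors directly as queries, keys, and values, whereas a real transformer first applies learned projections. The three token vectors are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product attention with queries = keys = values = inputs."""
    d = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this position against every position in the sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in vectors
        ]
        weights = softmax(scores)
        all_weights.append(weights)
        # Each output is a weighted average of every vector in the sequence.
        outputs.append([
            sum(w * v[i] for w, v in zip(weights, vectors))
            for i in range(d)
        ])
    return outputs, all_weights

# Three hypothetical 2-dimensional token embeddings.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence, and every row is computed independently, which is why the work can be done in parallel.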

Quick Comparison

- Feedforward → Basic predictions

- CNN → Image and vision tasks

- RNN → Sequence and time-based data

- Transformers → Advanced language understanding

Additionally, each model plays a unique role in real-world neural network applications. For example, CNNs are used in self-driving cars for object detection, while transformers power AI assistants like ChatGPT.

Overall, choosing the right neural network type depends on the problem you want to solve. Therefore, understanding these differences helps in building better and more efficient AI systems.

Key Components of Neural Networks Explained

To understand neural networks clearly, you first need to know their core building blocks. A neural network is not random. Instead, it follows a structured design where each component has a specific role in processing data. These components work together to transform raw input into meaningful output.

First, the input layer receives raw data. This data can include numbers, images, text, or audio. For example, in image recognition, pixel values enter through the input layer. As a result, this layer acts as the starting point of the entire system.

Next, the data moves into hidden layers. These layers perform most of the computation. In addition, they extract patterns, detect relationships, and refine information step by step. The deeper the network, the more hidden layers it has. Consequently, it can learn more complex features.

Finally, the output layer generates the final prediction. For instance, it may classify an image, predict a price, or identify a category. Therefore, this layer delivers the actual result based on learned patterns.

Along with layers, another important concept is nodes (neurons) and their connections. Each neuron connects to others, and each connection carries a weight. These weights decide how important each input is. Activation functions then control how much of a neuron's signal is passed forward, which lets the network capture patterns that a simple weighted sum cannot.

Training Neural Networks

Training is the most important step in working with neural networks because it is where the model actually learns from data. This process allows AI models to improve accuracy over time by reducing errors. In simple terms, training teaches the network how to make better predictions.

To begin with, the model makes an initial prediction using input data. Then, it compares this prediction with the actual result using a loss function. This loss function measures how wrong the model is. As a result, it gives feedback on how much improvement is needed.
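Two commonly used loss functions are mean squared error (for predicting numbers) and binary cross-entropy (for yes/no classification). The sketch below implements both in plain Python. The prediction and target values are hypothetical.

```python
import math

def mean_squared_error(predictions, targets):
    """Average squared difference; common for regression."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

def binary_cross_entropy(probabilities, labels):
    """Average negative log-likelihood; common for yes/no classification."""
    eps = 1e-12  # keeps log() away from zero
    return -sum(
        y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
        for p, y in zip(probabilities, labels)
    ) / len(labels)

targets = [3.0, 5.0, 7.0]
close_guess = [2.5, 5.0, 7.5]
poor_guess = [0.0, 9.0, 2.0]

print(mean_squared_error(close_guess, targets))
print(mean_squared_error(poor_guess, targets))

# A confident correct answer is penalized far less than a confident wrong one.
print(binary_cross_entropy([0.9], [1]), binary_cross_entropy([0.1], [1]))
```

In both cases a smaller number means a better prediction, which gives training a single value to push down.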

Next comes backpropagation, which is the core learning mechanism. In this step, the error is sent backward through the network to work out how much each weight contributed to it. Each weight can then be adjusted to reduce future mistakes. This process helps the model learn from its errors effectively.

After that, gradient descent is used to optimize the learning process. It uses those error signals (gradients) to move each weight a small step in the direction that reduces the error. In other words, it slowly updates weights to improve performance step by step. Therefore, the model tends to become more accurate with each iteration.
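Backpropagation is the chain rule applied layer by layer, starting at the loss. The sketch below assumes a deliberately tiny network (one input, one sigmoid hidden neuron, one linear output, squared-error loss) with hypothetical weights, and checks the hand-derived gradients against a numerical estimate.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def loss_fn(w1, w2, x, target):
    """Forward pass of a tiny network: x -> sigmoid(w1 * x) -> w2 * h."""
    h = sigmoid(w1 * x)
    y = w2 * h
    return (y - target) ** 2

def backprop(w1, w2, x, target):
    """Return dLoss/dw1 and dLoss/dw2 using the chain rule."""
    # Forward pass, keeping intermediate values.
    h = sigmoid(w1 * x)
    y = w2 * h
    # Backward pass: start at the loss and work toward the input.
    dloss_dy = 2 * (y - target)
    dloss_dw2 = dloss_dy * h
    dloss_dh = dloss_dy * w2
    dh_dz = h * (1 - h)            # derivative of sigmoid
    dloss_dw1 = dloss_dh * dh_dz * x
    return dloss_dw1, dloss_dw2

# Hypothetical values for illustration.
w1, w2, x, target = 0.5, -1.5, 2.0, 1.0
grad_w1, grad_w2 = backprop(w1, w2, x, target)

# Sanity check against a numerical estimate of the same slopes.
eps = 1e-6
num_w1 = (loss_fn(w1 + eps, w2, x, target) - loss_fn(w1 - eps, w2, x, target)) / (2 * eps)
num_w2 = (loss_fn(w1, w2 + eps, x, target) - loss_fn(w1, w2 - eps, x, target)) / (2 * eps)
print(grad_w1, num_w1)
print(grad_w2, num_w2)
```

Frameworks such as TensorFlow and PyTorch compute these gradients automatically, so in practice you rarely write the backward pass by hand.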
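Gradient descent is easiest to see on a one-dimensional error curve. The sketch below uses a stand-in error function with its minimum at w = 3. The function, starting point, and learning rate are all assumptions chosen for illustration.

```python
def error(w):
    """A stand-in error curve with its minimum at w = 3."""
    return (w - 3.0) ** 2

def gradient(w):
    """Slope of the error curve at w."""
    return 2.0 * (w - 3.0)

w = 0.0
learning_rate = 0.1
history = [w]
for _ in range(50):
    w = w - learning_rate * gradient(w)   # step downhill
    history.append(w)

print(round(history[1], 4), round(history[5], 4), round(w, 4))
```

Each step moves `w` a little closer to 3, and the steps shrink as the slope flattens near the minimum. A real network does the same thing across all of its weights at once.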

Now, training happens over multiple cycles called epochs. One epoch means the model has seen the entire dataset once. Many epochs, each made up of many weight updates (iterations), are often required to achieve good accuracy. The speed at which the model learns is controlled by the learning rate. If it is too high, the model may overshoot good solutions or even fail to converge. If it is too low, learning becomes slow.
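The effect of epochs and the learning rate can be seen on a toy problem. The sketch below assumes a dataset that follows y = 2x and a "model" with a single weight. The three learning rates are hypothetical values picked to show the slow, reasonable, and unstable cases.

```python
# Hypothetical toy dataset following y = 2x; the "model" is a single weight w.
dataset = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

def train(learning_rate, epochs):
    """Return the weight after each epoch of per-example gradient updates."""
    w = 0.0
    weight_per_epoch = []
    for _ in range(epochs):                 # one epoch = one full pass
        for x, y in dataset:                # one iteration = one update
            error = w * x - y
            w -= learning_rate * 2 * error * x
        weight_per_epoch.append(w)
    return weight_per_epoch

for lr in (0.001, 0.05, 0.3):
    final_w = train(lr, epochs=10)[-1]
    print(f"learning_rate={lr}: w after 10 epochs = {final_w:.4f}")
```

With the smallest rate, the weight is still far from 2 after ten epochs. With the middle rate it lands almost exactly on 2. With the largest rate each update overshoots, and the weight moves further away instead of settling.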

Key Terms Summary

- Epoch: One full pass of the dataset

- Learning Rate: Controls how fast the model learns

- Optimization: Process of adjusting weights to reduce the loss

For example, imagine training an image classifier to identify cats and dogs. Initially, the model may confuse both. However, after many epochs, it learns patterns like ear shape, fur texture, and face structure. Consequently, its accuracy improves significantly.

Overall, this training process is what makes neural networks powerful deep learning systems capable of solving real-world problems efficiently.

Neural Network Applications in Real World

Neural network applications are now deeply integrated into everyday digital systems. Moreover, they power many AI models that people use without even realizing it. From healthcare to entertainment, these systems are transforming how industries operate and make decisions.

To begin with, in healthcare, neural networks help doctors detect diseases from medical images like X-rays and MRIs. For example, AI models can help flag early signs of cancer in scans. As a result, diagnosis can become faster and more consistent.

In finance, neural networks are widely used for fraud detection and risk analysis. In addition, they are used to model market trends by analyzing large volumes of financial data, although such forecasts remain uncertain. Consequently, banks and trading platforms can make better-informed decisions.

Moving to e-commerce, platforms like Amazon and Flipkart use neural networks to recommend products. These AI models analyze user behavior, search history, and preferences. Therefore, customers receive personalized shopping suggestions, which improves sales and user experience.

In autonomous vehicles, neural networks play a critical role in object detection and decision-making. For instance, self-driving cars use them to identify pedestrians, traffic signals, and obstacles. This supports safer navigation on roads.

Similarly, in social media, AI models personalize feeds based on user activity. Platforms like Instagram and Facebook use neural networks to show relevant posts, ads, and videos. As a result, user engagement increases significantly.

Real-Life Examples

- Netflix: Uses neural networks to recommend shows based on viewing history

- Google Translate: Uses AI models to translate languages in real time

Overall, these neural network applications show how deeply AI has become part of modern life. Therefore, understanding them helps us see the real impact of artificial intelligence in the world today.

Tools & Frameworks Used in Neural Networks

To understand neural networks in practice, it is important to know the tools that power their development. Modern AI models are built using powerful frameworks that simplify complex calculations and training processes.

To begin with, TensorFlow is one of the most widely used deep learning tools. It helps developers build, train, and deploy neural networks efficiently. In addition, it supports large-scale machine learning projects used by companies worldwide.

Next, PyTorch is another popular AI framework known for its flexibility. Researchers often prefer it because it is easy to experiment with and debug. As a result, it has become a top choice in academic and industrial AI projects.

Another useful tool is Keras, which provides a simple interface for building neural networks. Therefore, beginners can quickly design and test models without dealing with complex code.

Finally, Google Colab offers a free tier of cloud-based computing resources, including limited GPU access. This means users can train small AI models without needing expensive hardware. Moreover, it supports both TensorFlow and PyTorch, making it highly convenient for learners.

Overall, these deep learning tools make it easier to build advanced AI systems and understand real-world neural network applications effectively.

Challenges & Limitations of Neural Networks

Even though neural networks are powerful, they still come with several limitations. Understanding these challenges is important for building better and more reliable AI models.

To begin with, neural networks require large amounts of data to perform well. Without enough data, they often fail to learn meaningful patterns. In addition, collecting and labeling data can be time-consuming and expensive.

Another major challenge is high computational cost. Training deep learning models needs powerful GPUs and significant processing time. As a result, it becomes difficult for beginners or small businesses to work with advanced neural networks.

Furthermore, there is the black-box problem. This means it is often unclear how a neural network makes decisions. Even though it gives accurate results, the internal logic is hard to interpret. Therefore, trust and transparency become issues in critical areas like healthcare and finance.

Lastly, overfitting is a common problem. In this case, the model performs very well on training data but poorly on new, unseen data. Consequently, it fails to generalize effectively in real-world scenarios.
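A common way to spot overfitting is to compare accuracy on training data with accuracy on held-out data. The sketch below uses a deliberately simple stand-in instead of a neural network: a "memorizer" that copies the label of the closest training point, compared with a simple threshold rule. The data is hypothetical, and two training labels are intentionally wrong to act as noise.

```python
# Hypothetical 1-D data. The true rule is "label is 1 when x > 0.5",
# but two training labels (at 0.3 and 0.7) are deliberately wrong.
train_set = [(0.1, 0), (0.2, 0), (0.3, 1), (0.4, 0),
             (0.6, 1), (0.7, 0), (0.8, 1), (0.9, 1)]
test_set = [(0.15, 0), (0.28, 0), (0.32, 0), (0.68, 1), (0.72, 1), (0.85, 1)]

def memorizer(x):
    """Overfit stand-in: copy the label of the closest training point."""
    nearest = min(train_set, key=lambda pair: abs(pair[0] - x))
    return nearest[1]

def simple_rule(x):
    """A simpler model that ignores the noisy points."""
    return 1 if x > 0.5 else 0

def accuracy(model, dataset):
    """Fraction of examples the model labels correctly."""
    return sum(model(x) == y for x, y in dataset) / len(dataset)

for name, model in (("memorizer", memorizer), ("simple rule", simple_rule)):
    print(name, accuracy(model, train_set), round(accuracy(model, test_set), 2))
```

The memorizer is perfect on the training set but does poorly on the test set, because it has learned the noise along with the pattern. The simpler rule scores lower on training data yet generalizes better, which is the gap to watch for when training real networks.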

Overall, these challenges show that while AI is powerful, it still needs careful design and improvement to become more efficient and explainable.

Future of Neural Networks & AI Trends

The future of AI is strongly connected to the evolution of neural networks and faster computing systems. Moreover, as data and technology continue to grow, AI models are becoming more powerful and efficient than ever before.

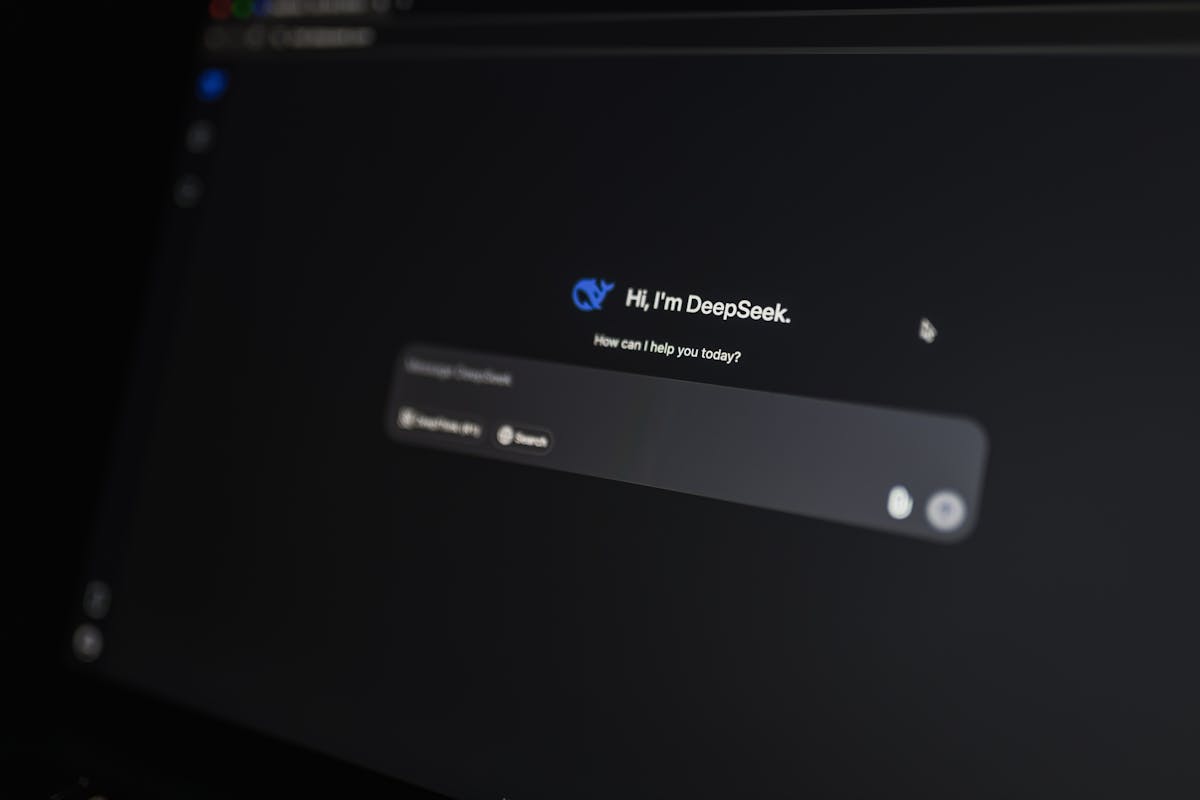

To begin with, generative AI is one of the biggest trends shaping this future. These systems can create text, images, music, and even videos. For example, tools like ChatGPT and image generators show how advanced neural networks are being applied in real life. As a result, content creation and problem-solving are becoming faster and more automated.

In addition, smarter AI models are being developed with better accuracy and understanding. These models can learn from smaller datasets and still deliver strong performance. Therefore, AI is becoming more accessible and efficient.

Another major trend is Edge AI, where intelligence is moved closer to devices like smartphones, cameras, and IoT systems. Consequently, processing becomes faster and more secure since data does not always need to travel to the cloud.

Finally, automation trends are expanding rapidly across industries. From customer service to manufacturing, neural networks are helping automate complex tasks. As a result, businesses are improving efficiency and reducing manual effort.

Overall, these trends show that neural networks will continue to play a central role in shaping intelligent systems across the world.

Conclusion

Neural network basics are essential for understanding how modern AI systems think, learn, and make decisions. They form the foundation of many advanced technologies, from simple pattern recognition to complex deep learning systems. As a result, neural networks have transformed how machines interact with data and solve real-world problems.

As AI continues to evolve rapidly, learning these fundamentals gives you a strong advantage. In addition, it helps you build a career in fields like data science, machine learning, and automation. Whether you are just starting out or already exploring AI models for professional growth, this knowledge acts as a solid starting point.

Therefore, the best way forward is to practice. Start with small projects, experiment with tools like TensorFlow or PyTorch, and gradually explore real neural network applications. Over time, you will gain confidence and deeper understanding.

Ultimately, the future of AI is already here—and it is powered by neural networks.

FAQs

1. What are neural networks in simple words?

Neural networks are computer systems designed to learn from data by mimicking how the human brain processes information. Moreover, they use connected nodes to identify patterns and make predictions. As a result, machines can solve problems like image recognition and language understanding.

2. Why are neural networks important in AI?

Neural networks are important because they help AI models learn from large datasets. In addition, they improve accuracy over time by identifying hidden patterns. Therefore, they make modern AI systems smarter, faster, and more reliable.

3. What are real-world neural network applications?

Neural networks are used in many industries today. For example, they power healthcare diagnostics, fraud detection in finance, self-driving cars, voice assistants, and recommendation systems. Consequently, they play a major role in everyday digital experiences.

4. What is the difference between machine learning and neural networks?

Machine learning is a broad field of AI that focuses on teaching machines from data. Neural networks are one family of machine learning models, built from layers of connected nodes, and networks with many layers are the basis of deep learning. Therefore, neural networks tend to do best on complex tasks with large amounts of data, while simpler methods can still be a better fit for small, structured datasets.

5. Do neural networks require coding?

Yes, building neural networks usually requires programming knowledge, especially in Python. Moreover, frameworks like TensorFlow and PyTorch make it easier to design and train models. As a result, even beginners can start learning with basic coding skills.